Rényi divergence as a function of P = (p, 1 − p) for Q = (1/3, 2/3)

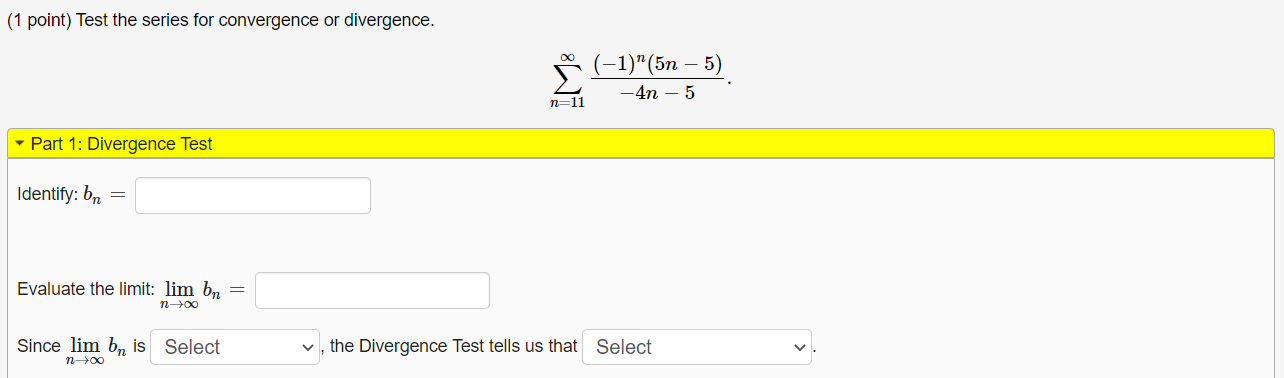

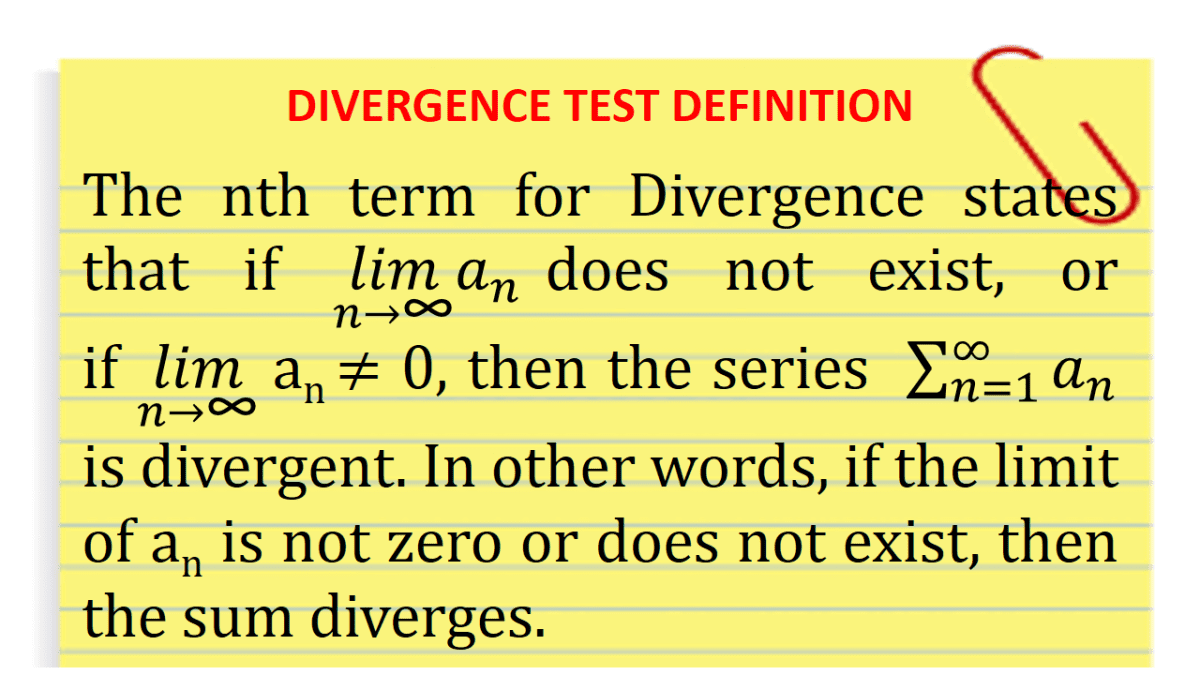

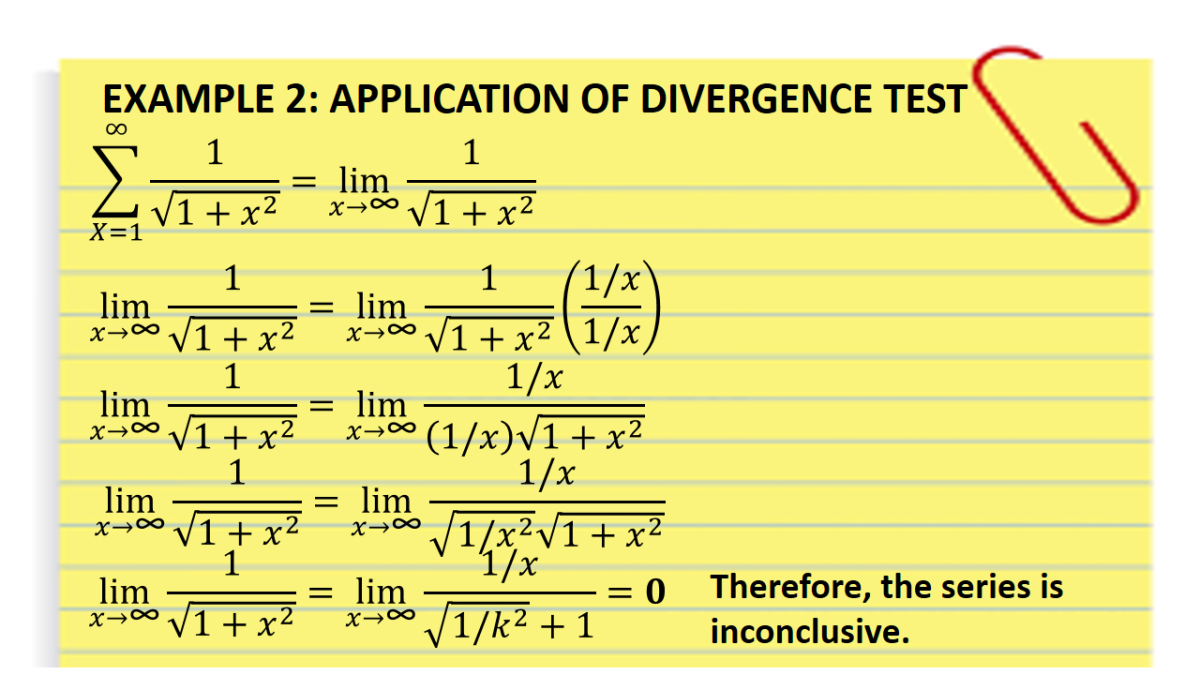

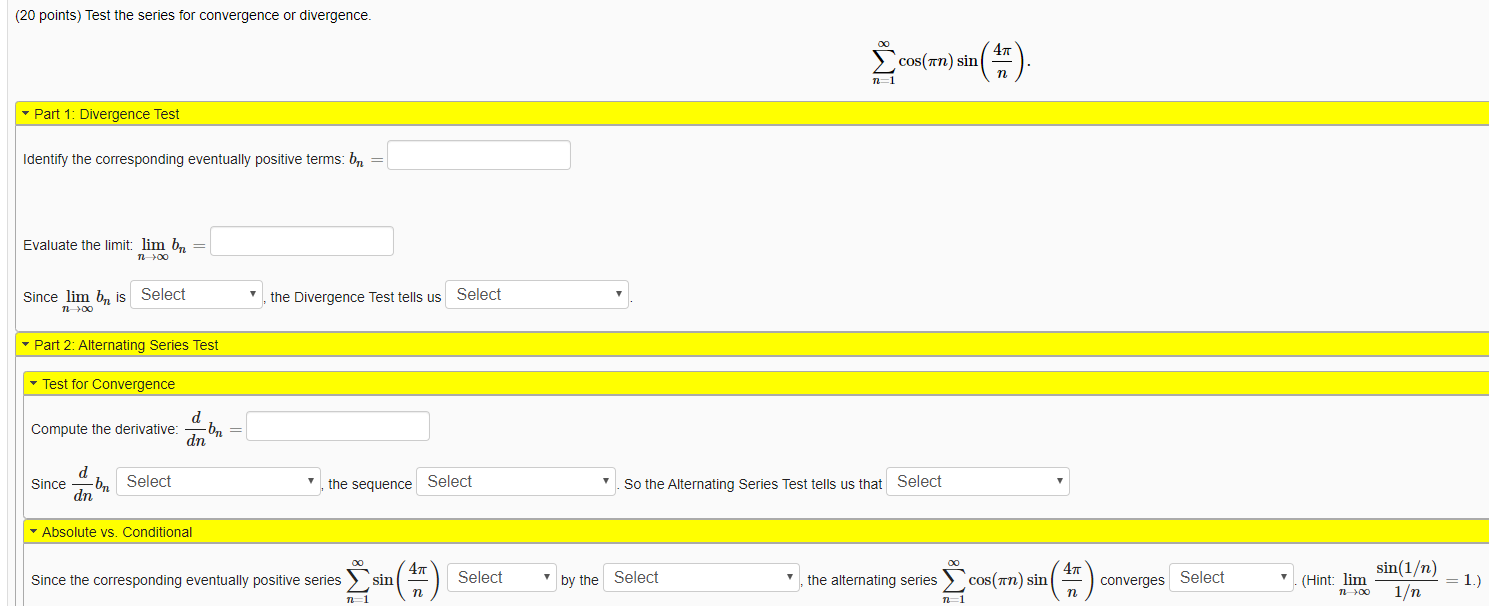

SOLVED: Test the series for convergence or divergence. Part 1: Divergence Test. Identify the corresponding positive terms: $b_n = 1/(8n+4)$. Evaluate the limit: $\lim b_n$ as $n$ approaches $\infty$. Since $\lim b_n$ is

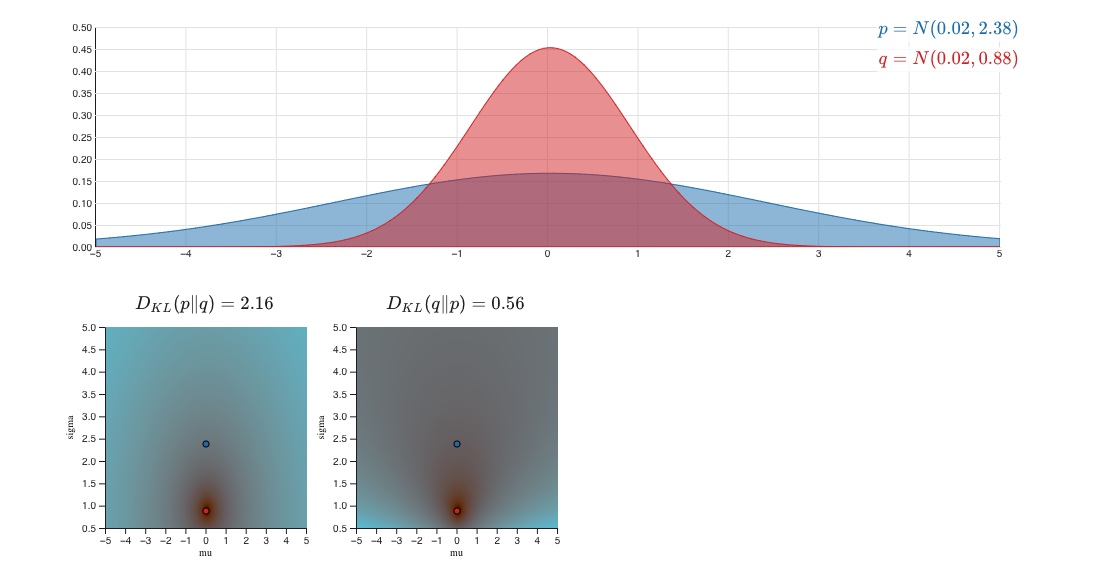

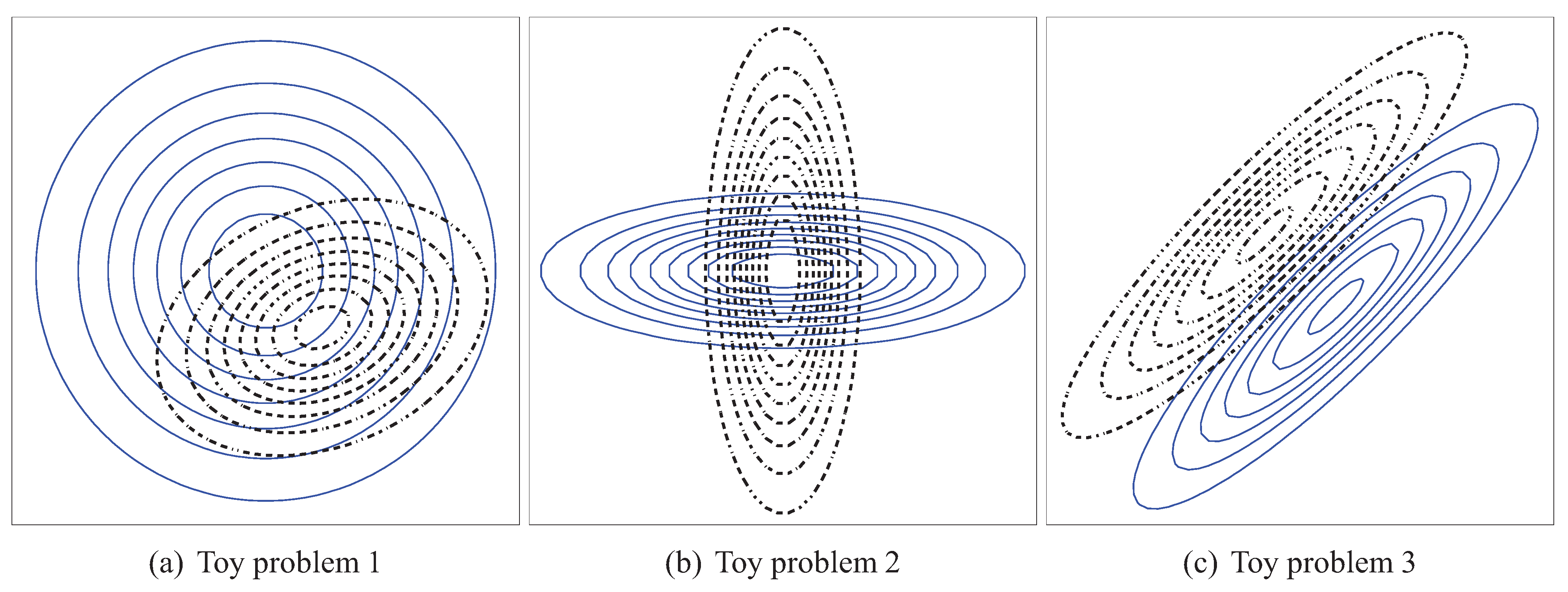

Entropy | Free Full-Text | On Accuracy of PDF Divergence Estimators and Their Applicability to Representative Data Sampling

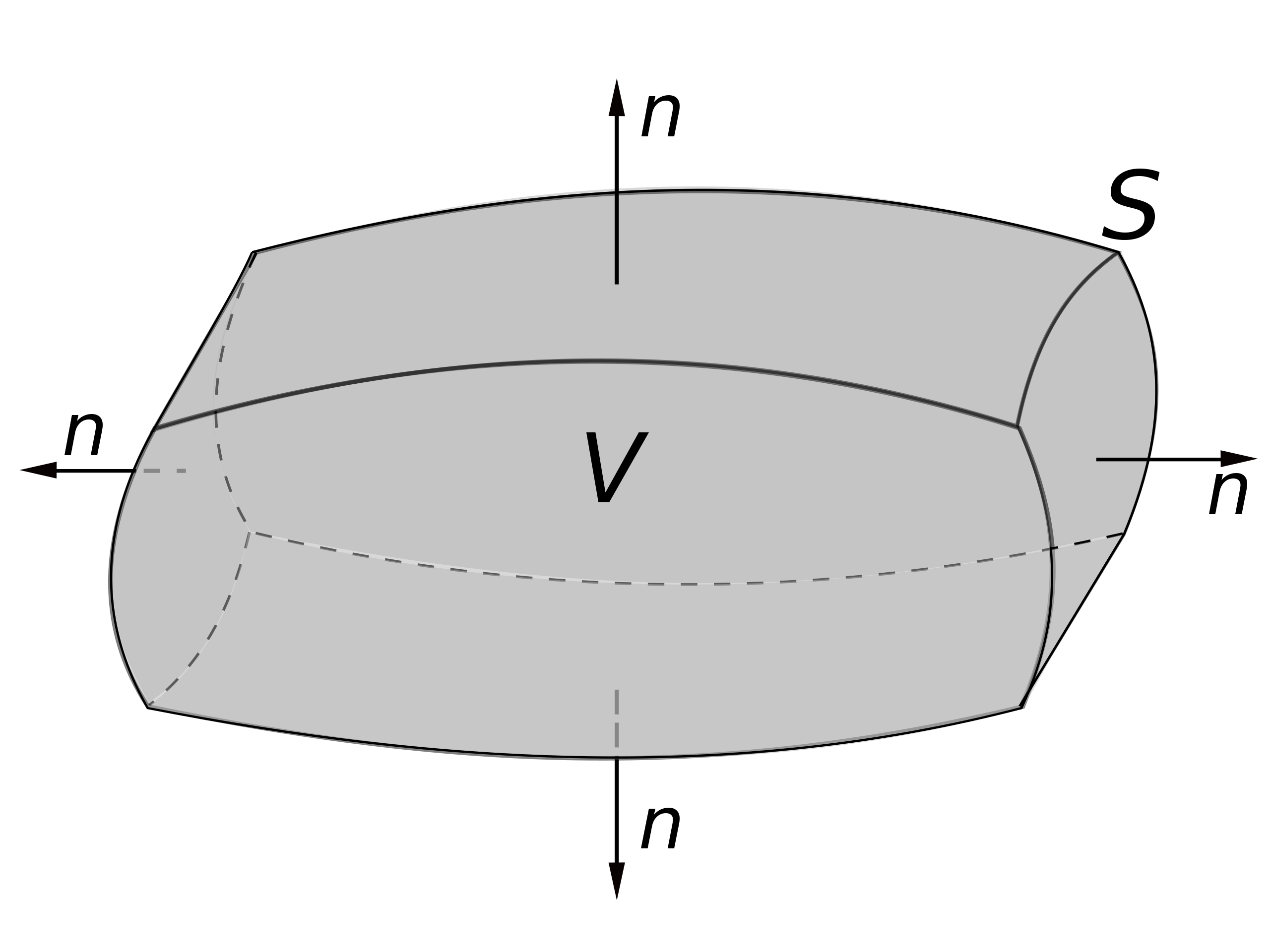

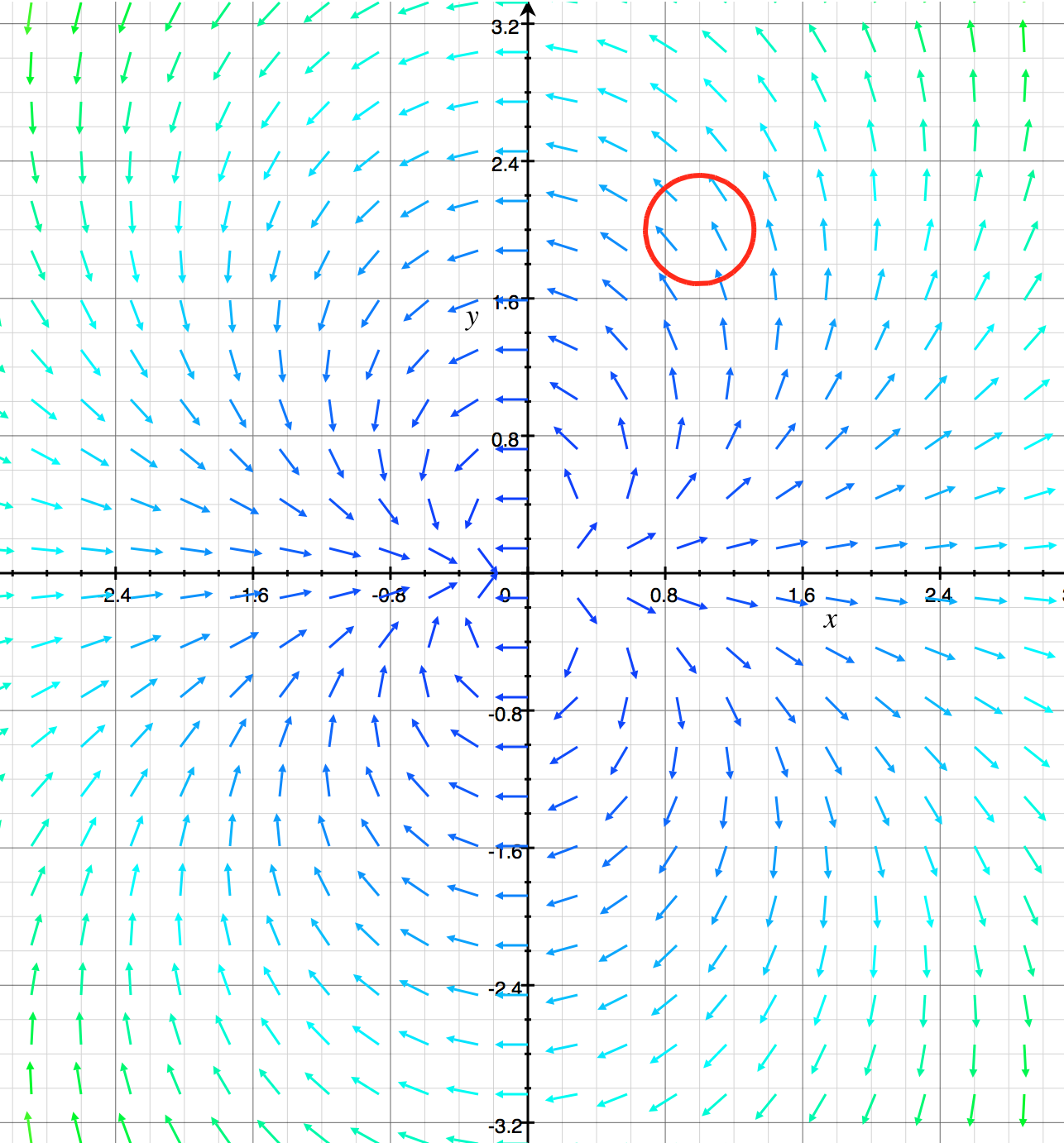

real analysis - Using the divergence theorem to prove that $\frac{1}{|B_R(0)|} \int_{B_R(0)} M\mathbf{y} \cdot \mathbf{y} \, dy = \frac{R^2}{n + 2} \operatorname{trace}(M)$ - Mathematics Stack Exchange

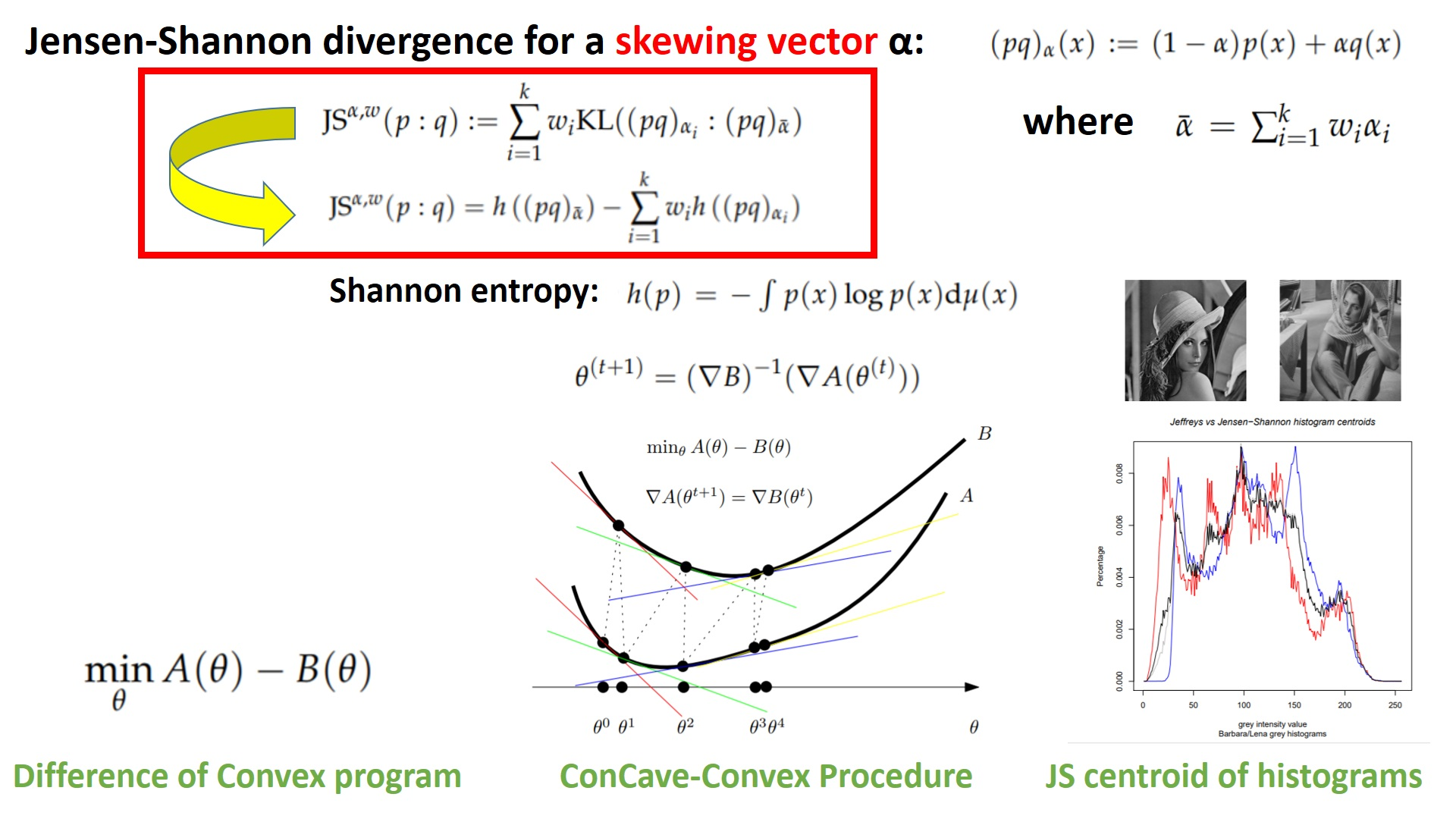

Entropy | Free Full-Text | On a Generalization of the Jensen–Shannon Divergence and the Jensen–Shannon Centroid

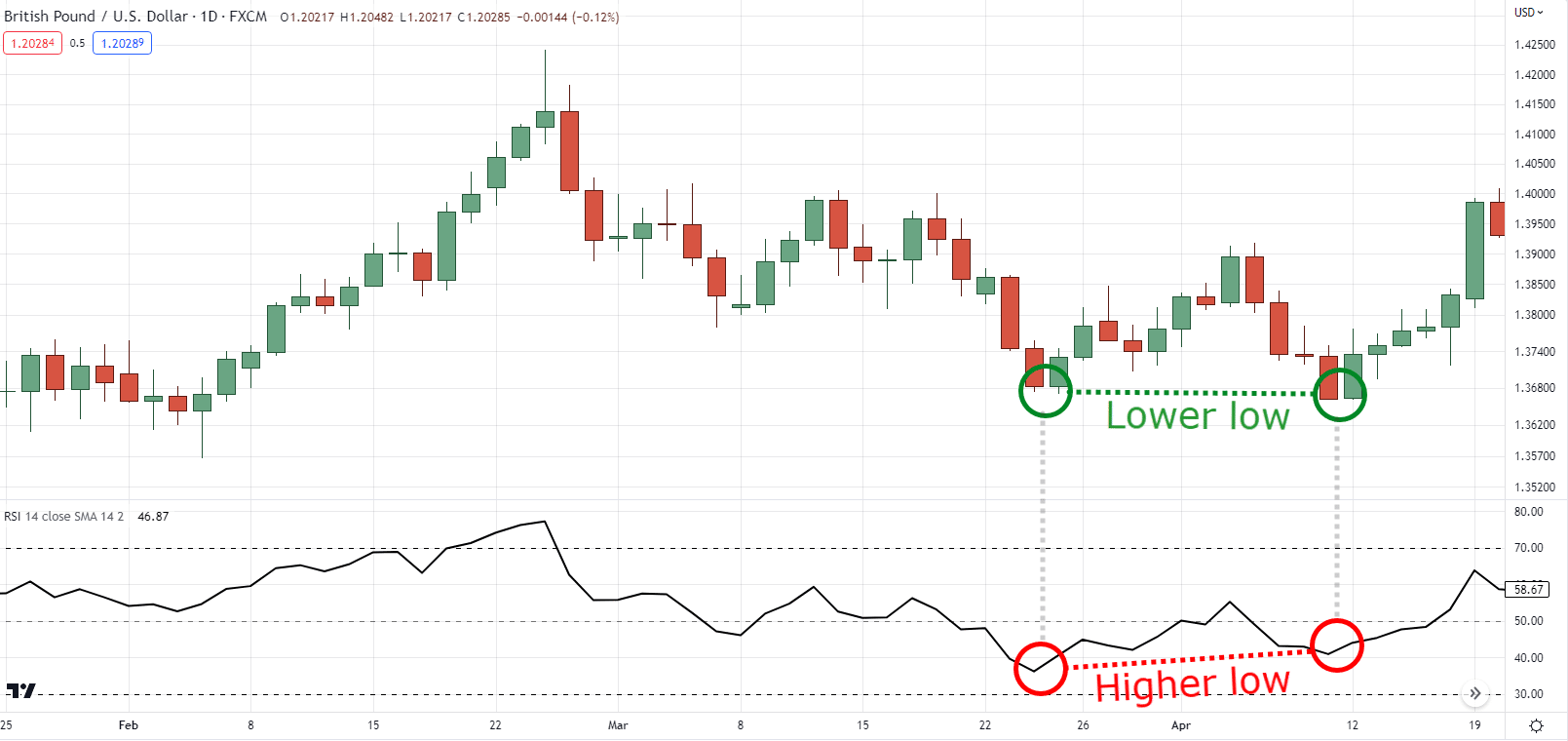

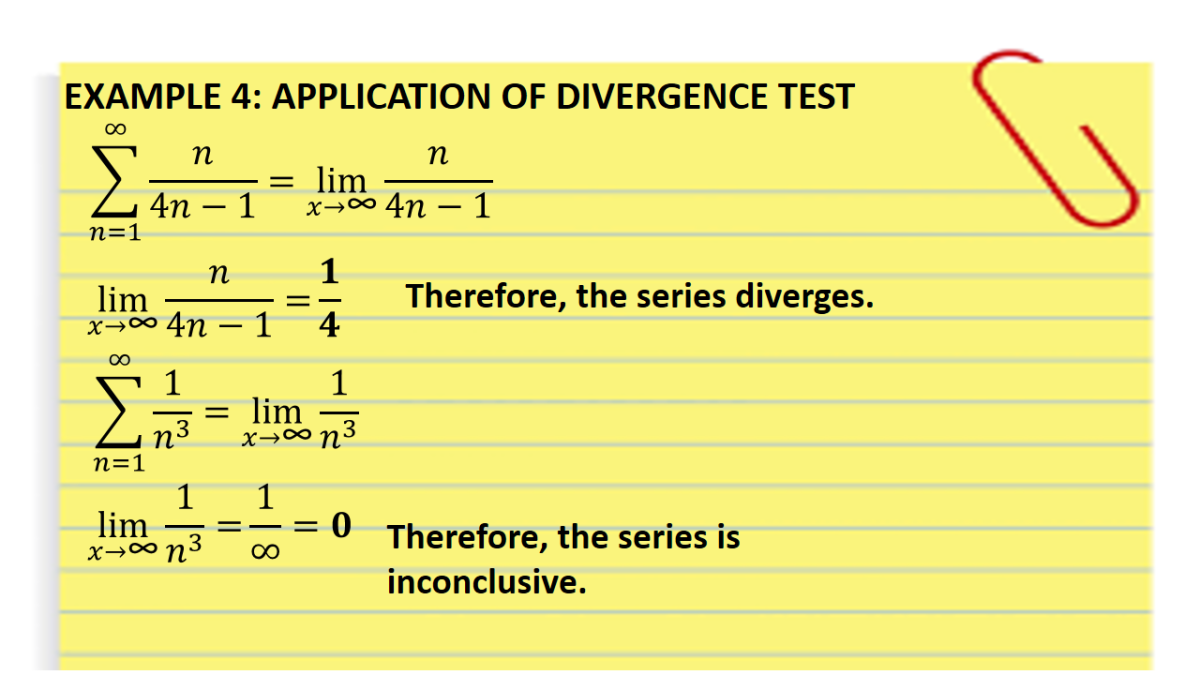

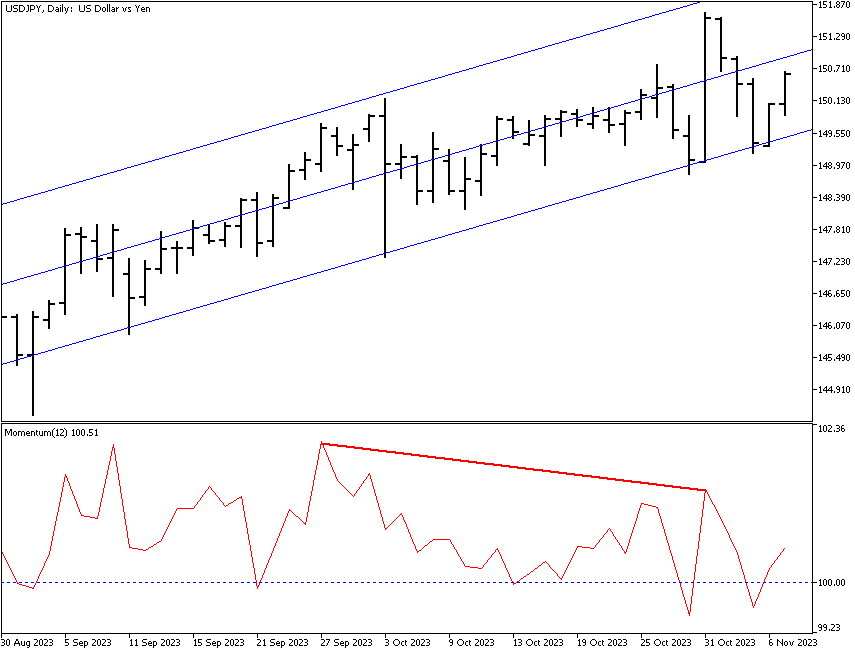
